|

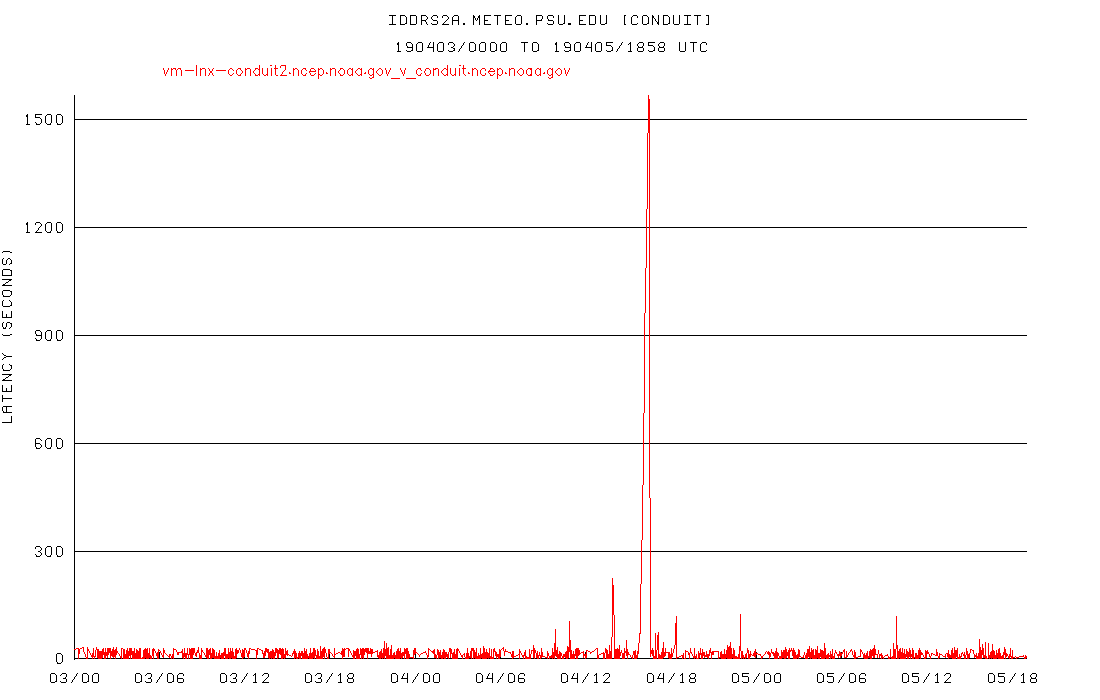

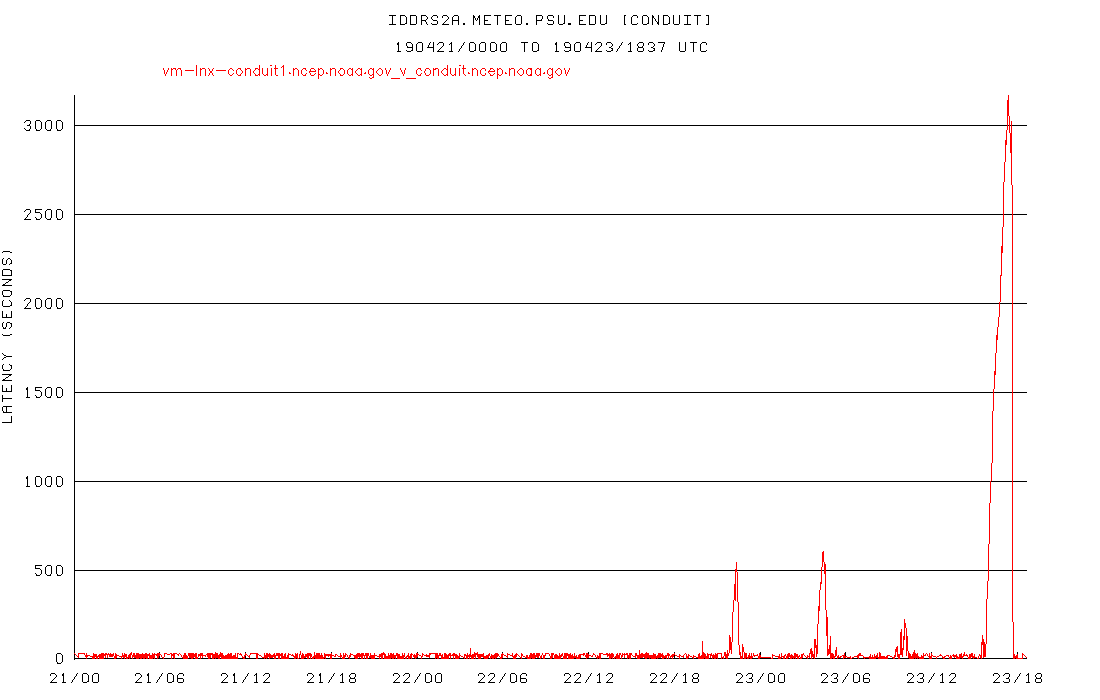

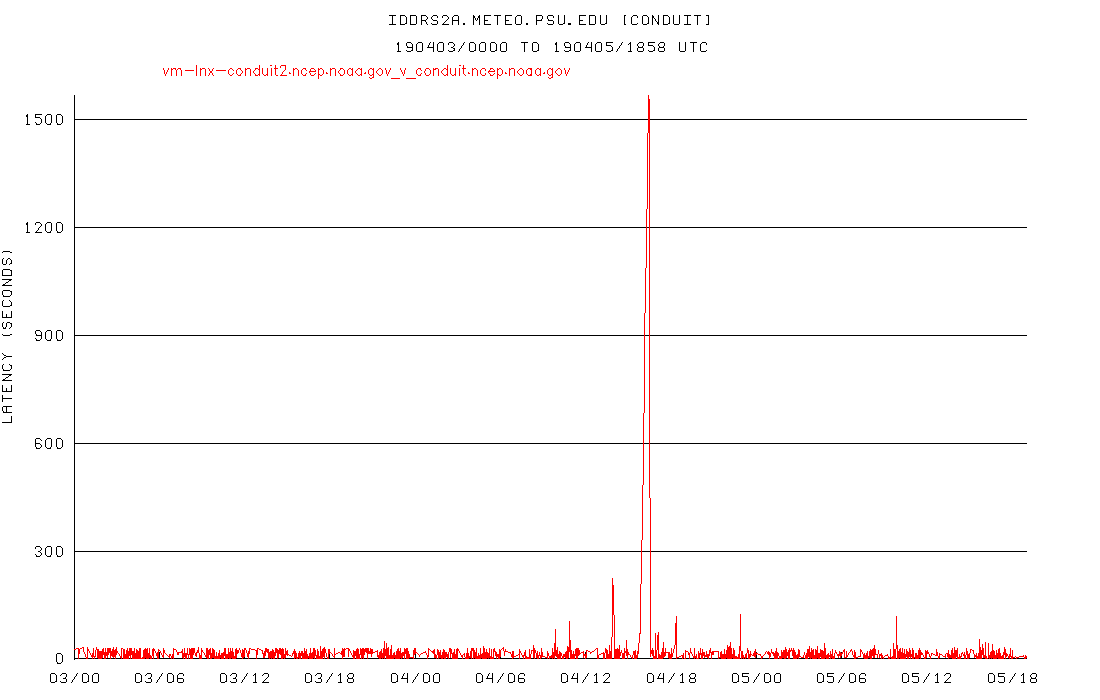

I'm still on the 10-way split that I've been on for quite some time, and without my changing anything, our lags got much, much better starting on Friday, 4/19, with the 12 UTC model sequence. I don't know if this correlates with Unidata switching to a 20-way split or not, but that happened around the same time.

Here are my lag plots, the first ends 04 UTC 4/20, and the second just now at 19 UTC 4/23. Note the Y axis on the first plot goes to ~3600 seconds, but on the second plot, only to ~100 seconds.

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: Person, Arthur A. <address@hidden>

Sent: Tuesday, April 23, 2019 1:49 PM

To: Pete Pokrandt; Gilbert Sebenste

Cc: Kevin Goebbert; address@hidden; _NCEP.List.pmb-dataflow; Mike Zuranski; Derek VanPelt - NOAA Affiliate; Dustin Sheffler - NOAA Federal; address@hidden

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

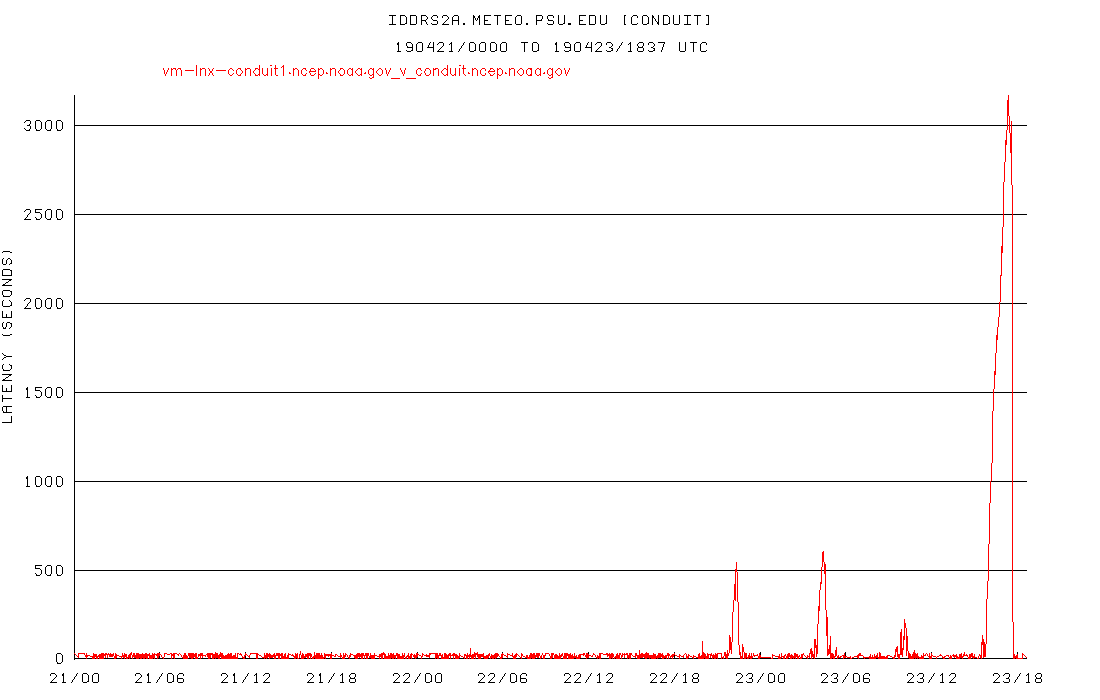

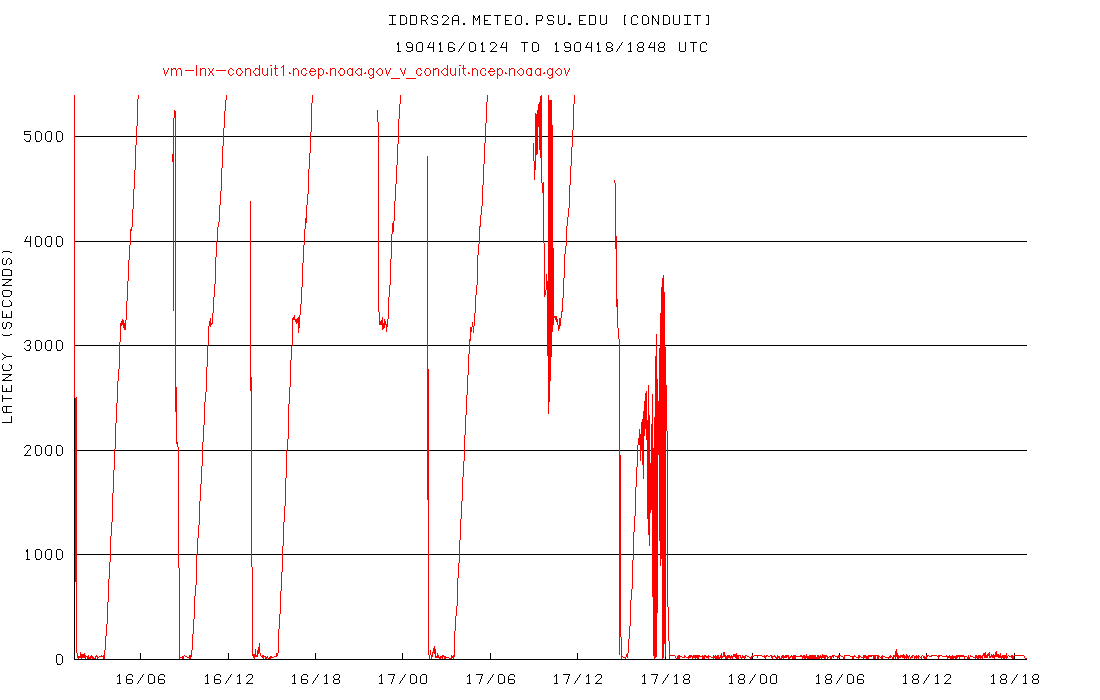

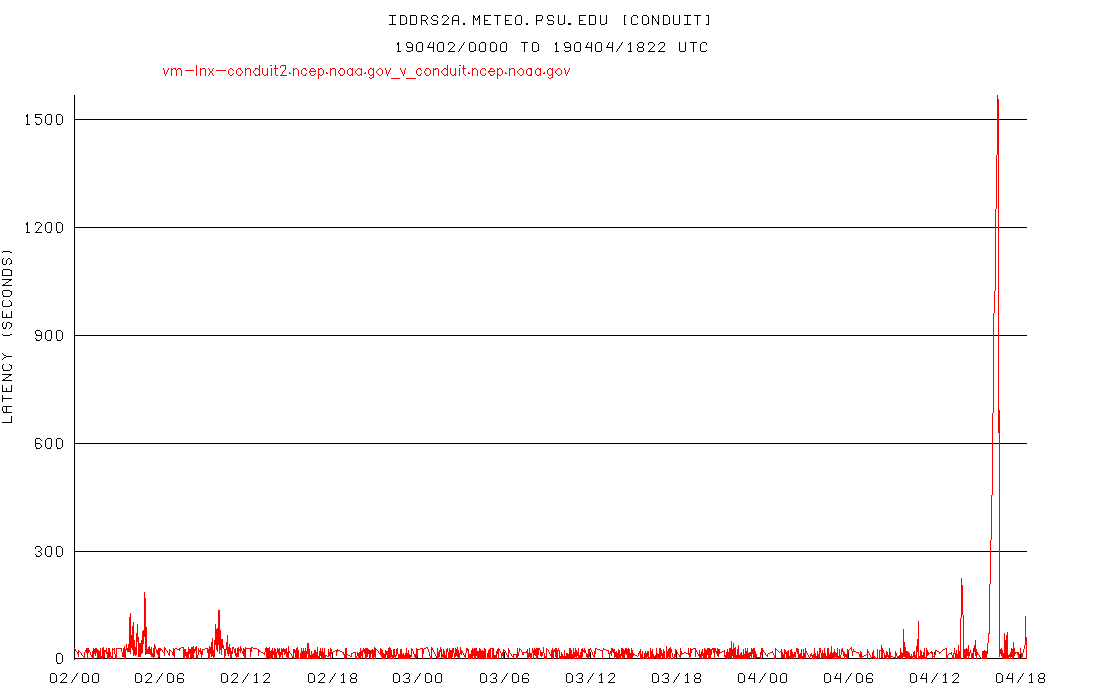

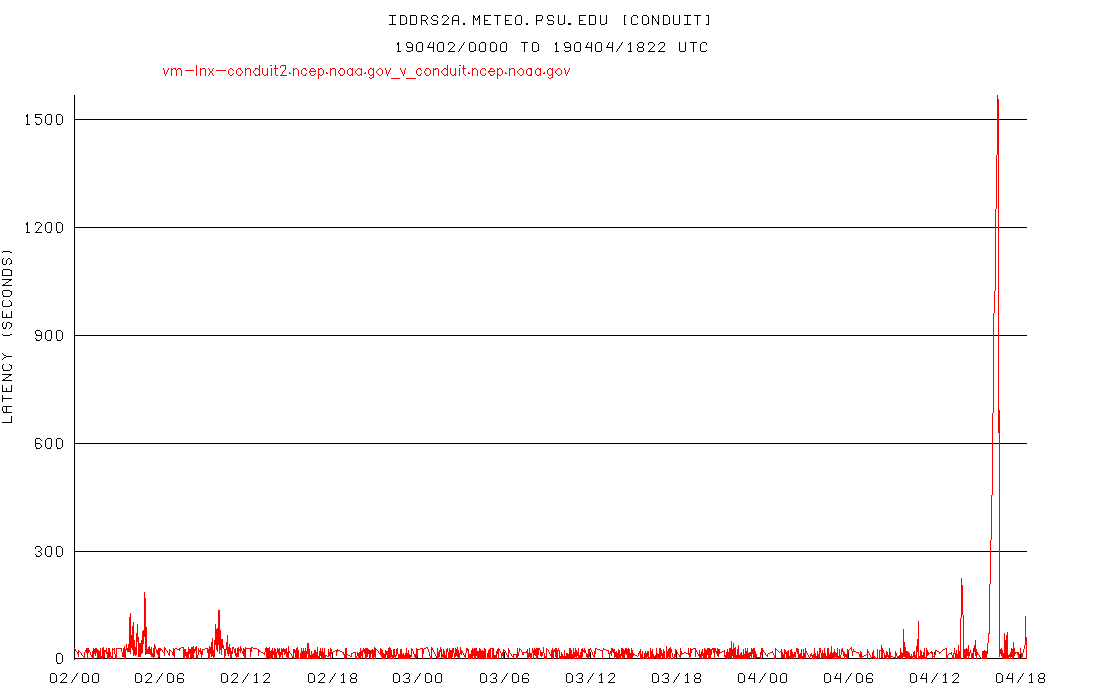

I switched our test system iddrs2a feeding from conduit.ncep.noaa.gov back to a 2-way split (from a 20-way split) yesterday to see how it would hold up:

While not as good as prior to February, it wasn't terrible, at least until this morning. Looks like the 20-way split may be the solution going forward if this is the "new normal" for network performance.

Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: address@hidden,

phone: 814-863-1563

From: Pete Pokrandt <address@hidden>

Sent: Saturday, April 20, 2019 12:29 AM

To: Person, Arthur A.; Gilbert Sebenste

Cc: Kevin Goebbert; address@hidden; _NCEP.List.pmb-dataflow; Mike Zuranski; Derek VanPelt - NOAA Affiliate; Dustin Sheffler - NOAA Federal; address@hidden

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

Well, I haven't changed anything in the past few days, but my lags dropped back to pretty much pre-February 10 levels starting with today's (20190419) 12 UTC run. I know Unidata switched to a 20-way split feed around that same time... I am still running a 10-way split. I didn't change anything between today's 06 UTC run and the 12 UTC run, but the lags dropped considerably, and look like they used to.

I wonder if some bad piece of hardware got swapped out somewhere, or if some change was made internally at NCEP that fixed whatever was going on. Or, perhaps the Unidata switch to a 20-way feed somehow reduced the load on a router somewhere and data is getting through more easily?

Strange..

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: Person, Arthur A. <address@hidden>

Sent: Thursday, April 18, 2019 2:20 PM

To: Gilbert Sebenste; Pete Pokrandt

Cc: Kevin Goebbert; address@hidden; _NCEP.List.pmb-dataflow; Mike Zuranski; Derek VanPelt - NOAA Affiliate; Dustin Sheffler - NOAA Federal; address@hidden

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

All --

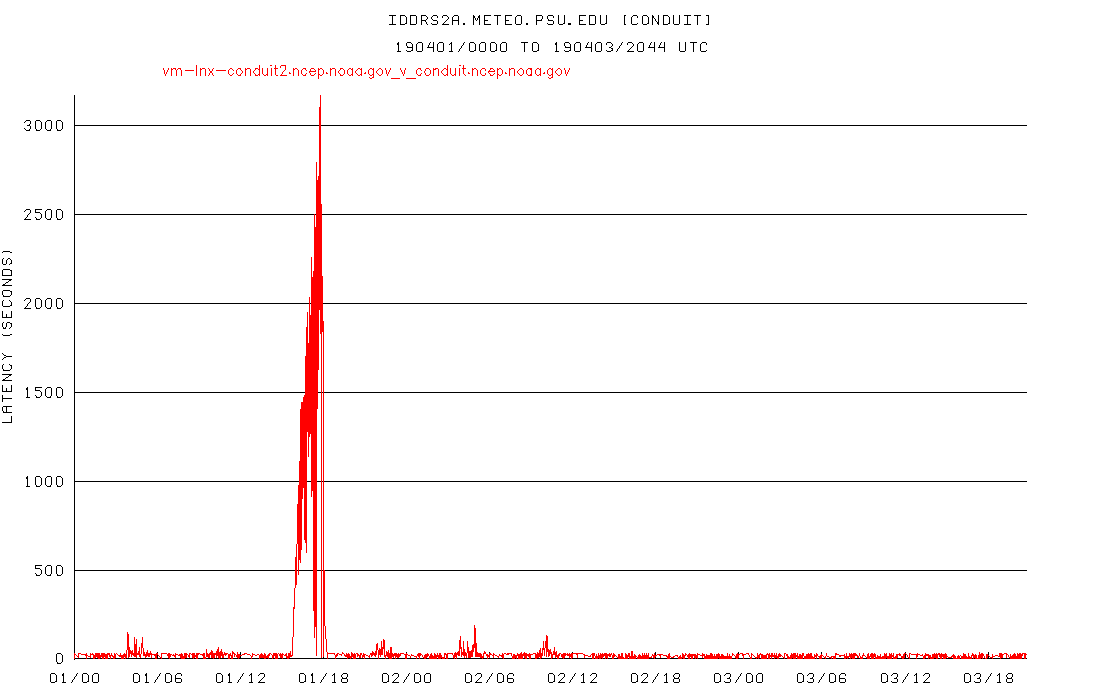

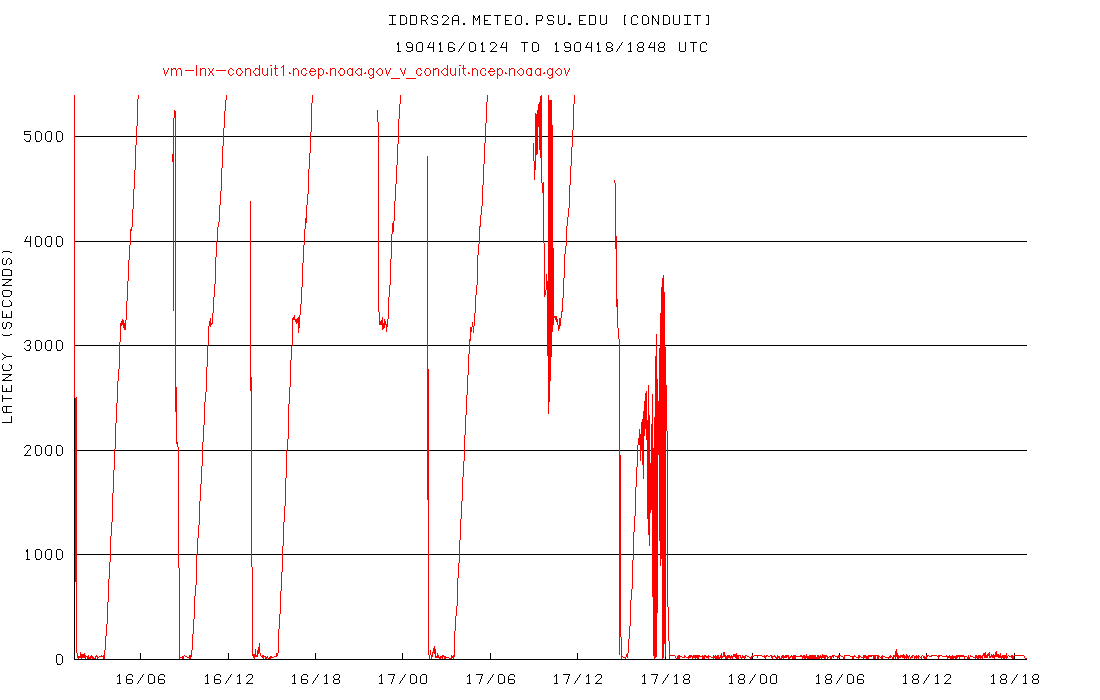

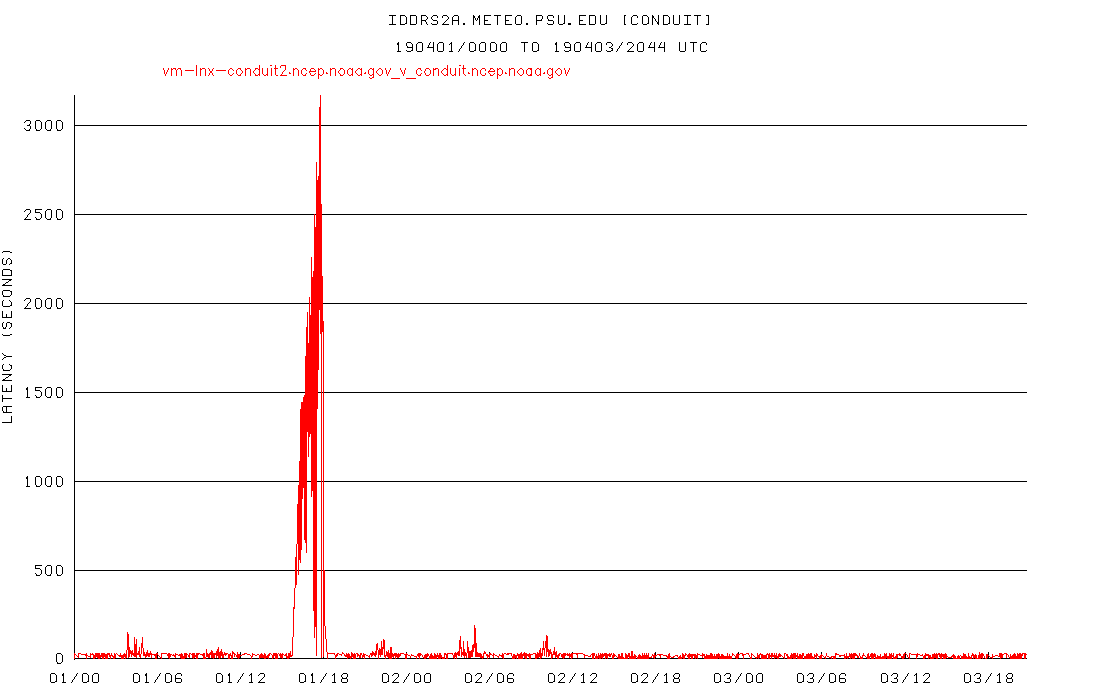

I switched our test system, iddrs2a, feeding from conduit.ncep.noaa.gov from a 2-way split to a 20-way split yesterday, and the results are dramatic:

Although conduit feed performance at other sites improved a little last night with the MRMS feed failure, it doesn't explain this improvement entirely. This leads me to ponder the causes of such an improvement:

1) The network path does not appear to be bandwidth constrained, otherwise there would be no improvement no matter how many pipes were used;

2) The problem, therefore, would appear to be packet oriented, either with path packet saturation, or packet shaping.
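A rough way to see how points 1 and 2 can both hold is the Mathis approximation for a single TCP connection, rate ≈ (MSS / RTT) · (C / √p): at a round-trip time near 110 ms (about what the traceroutes in this thread show to the NCEP end), a small packet-loss rate p caps each connection well below the link speed, and N parallel connections raise the aggregate cap roughly N-fold. The sketch below only illustrates that scaling; the MSS, the loss rate, and C ≈ 1.22 are assumed values, not measurements from this thread, and the real cause was not confirmed here.

```python
import math


def mathis_throughput_bps(mss_bytes, rtt_s, loss_rate, c=1.22):
    """Approximate upper bound on one TCP connection's throughput, in bits/s."""
    return (mss_bytes * 8 / rtt_s) * (c / math.sqrt(loss_rate))


def aggregate_bps(n_connections, mss_bytes=1460, rtt_s=0.110, loss_rate=1e-4):
    """Aggregate bound for n independent connections on the same path.

    All default values are assumptions chosen for illustration only.
    """
    return n_connections * mathis_throughput_bps(mss_bytes, rtt_s, loss_rate)


if __name__ == "__main__":
    for n in (1, 2, 10, 20):
        mbit = aggregate_bps(n) / 1e6
        print(f"{n:2d}-way split: ~{mbit:.1f} Mbit/s upper bound")
```

Under this model the per-connection cap does not depend on the link's capacity at all, which would be consistent with more pipes helping on a path that is not bandwidth constrained.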

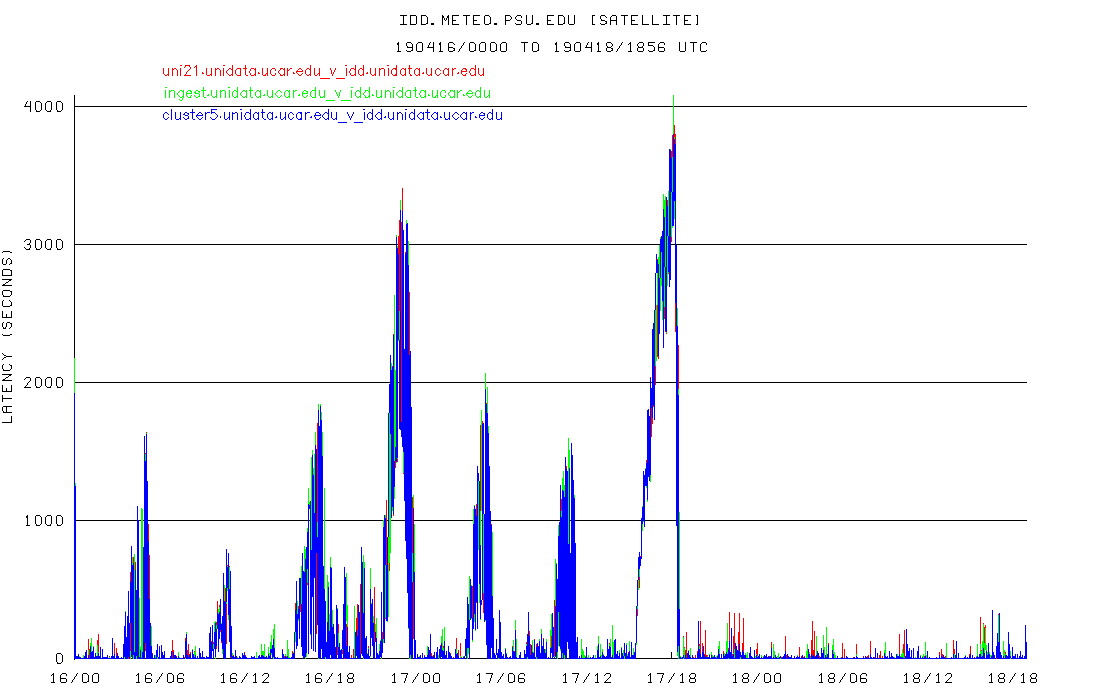

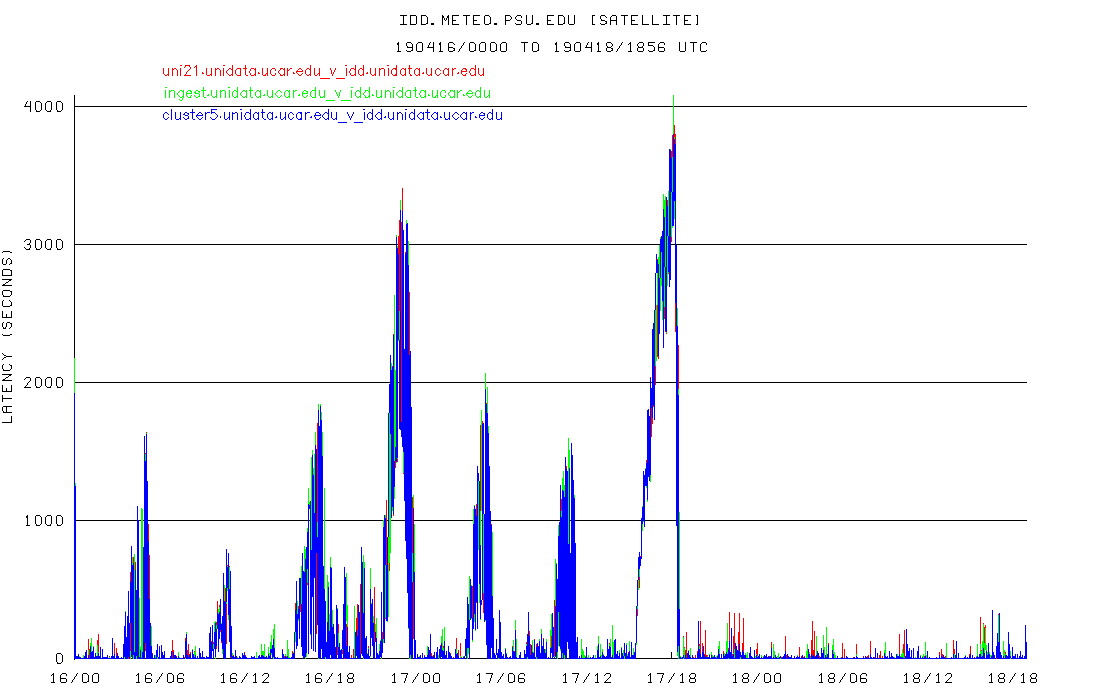

I'm not a networking expert, so maybe I'm missing another possibility here, but I'm curious whether packet shaping could account for some of the throughput issues. I've also been having trouble getting timely delivery of our Unidata IDD satellite feed, and discovered that switching that to a 10-way split feed (from a 2-way split) has reduced the latencies from 2000-3000 seconds down to less than 300 seconds. Interestingly, the peak satellite feed latencies (see below) occur at the same time as the peak conduit latencies, but this path is unrelated to NCEP (as far as I know). Is it possible that Internet2 could be packet-shaping their traffic and that this could be part of the cause for the packet latencies we're seeing?

Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: address@hidden,

phone: 814-863-1563

From: address@hidden <address@hidden> on behalf of Gilbert Sebenste <address@hidden>

Sent: Thursday, April 18, 2019 2:29 AM

To: Pete Pokrandt

Cc: Kevin Goebbert; address@hidden; _NCEP.List.pmb-dataflow; Mike Zuranski; Derek VanPelt - NOAA Affiliate; Dustin Sheffler - NOAA Federal; address@hidden

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

FYI: all evening and into the overnight, MRMS data has been missing, QC BR has been down for the last 40 minutes, but smaller products are coming through somewhat more reliably as of 6Z. CONDUIT was still substantially delayed around 4Z with the GFS.

Gilbert

Here are a few traceroutes from just now, from idd-agg.aos.wisc.edu to conduit.ncep.noaa.gov. The lags are up and running around 600-800 seconds right now. I'm not including all of the * * * lines from after 140.90.76.65, which is presumably behind a firewall.

2209 UTC Tuesday Apr 16

2 128.104.4.129 (128.104.4.129) 1.700 ms 1.737 ms 1.772 ms

5 144.92.254.229 (144.92.254.229) 11.530 ms 11.472 ms 11.535 ms

10 140.208.63.30 (140.208.63.30) 134.030 ms 126.195 ms 126.305 ms

11 140.90.76.65 (140.90.76.65) 106.810 ms 104.553 ms 104.603 ms

2230 UTC Tuesday Apr 16

traceroute -p 388 conduit.ncep.noaa.gov

2 128.104.4.129 (128.104.4.129) 6.917 ms 6.895 ms 2.004 ms

5 144.92.254.229 (144.92.254.229) 6.909 ms 13.255 ms 6.863 ms

10 140.208.63.30 (140.208.63.30) 25.677 ms 25.767 ms 29.543 ms

11 140.90.76.65 (140.90.76.65) 105.812 ms 105.345 ms 108.857 ms

2232 UTC Tuesday Apr 16

traceroute -p 388 conduit.ncep.noaa.gov

2 128.104.4.129 (128.104.4.129) 1.915 ms 2.652 ms 2.775 ms

5 144.92.254.229 (144.92.254.229) 6.891 ms 6.838 ms 6.840 ms

10 140.208.63.30 (140.208.63.30) 25.361 ms 25.427 ms 25.240 ms

11 140.90.76.65 (140.90.76.65) 113.194 ms 115.553 ms 115.543 ms

2234 UTC Tuesday Apr 16

traceroute -p 388 conduit.ncep.noaa.gov

2 128.104.4.129 (128.104.4.129) 1.645 ms 1.948 ms 1.729 ms

5 144.92.254.229 (144.92.254.229) 6.241 ms 6.240 ms 6.220 ms

10 140.208.63.30 (140.208.63.30) 25.199 ms 25.284 ms 25.351 ms

11 140.90.76.65 (140.90.76.65) 118.314 ms 118.707 ms 118.768 ms

2236 UTC Tuesday Apr 16

traceroute -p 388 conduit.ncep.noaa.gov

2 128.104.4.129 (128.104.4.129) 1.517 ms 1.630 ms 1.734 ms

5 144.92.254.229 (144.92.254.229) 6.384 ms 6.317 ms 6.314 ms

10 140.208.63.30 (140.208.63.30) 25.310 ms 25.268 ms 25.401 ms

11 140.90.76.65 (140.90.76.65) 118.299 ms 123.763 ms 122.207 ms

2242 UTC

traceroute -p 388 conduit.ncep.noaa.gov

2 128.104.4.129 (128.104.4.129) 6.039 ms 5.778 ms 1.813 ms

5 144.92.254.229 (144.92.254.229) 10.369 ms 6.626 ms 10.281 ms

10 140.208.63.30 (140.208.63.30) 85.763 ms 85.768 ms 83.623 ms

11 140.90.76.65 (140.90.76.65) 131.912 ms 132.662 ms 132.340 ms
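To compare runs like these side by side, the hop lines can be parsed and the three probe times averaged per hop. The snippet below is a minimal sketch, assuming the hop-line layout shown above (hop number, host, address in parentheses, then probe times); `parse_hop` and `SAMPLE` are illustrative names, and the sample lines are copied from the hop 10 entries above.

```python
import re
from statistics import mean

# hop number, host, (address), then the rest of the line with the probe times
HOP_RE = re.compile(r"^\s*(\d+)\s+(\S+)\s+\(([\d.]+)\)\s+(.*)$")


def parse_hop(line):
    """Return (hop_number, address, [rtt_ms, ...]) or None for non-hop lines."""
    m = HOP_RE.match(line)
    if not m:
        return None
    hop, _host, address, rest = m.groups()
    # Take every decimal number after the address as a probe time in ms.
    rtts = [float(x) for x in re.findall(r"\d+\.\d+", rest)]
    return int(hop), address, rtts


SAMPLE = {
    "2209 UTC": "10 140.208.63.30 (140.208.63.30) 134.030 ms 126.195 ms 126.305 ms",
    "2234 UTC": "10 140.208.63.30 (140.208.63.30) 25.199 ms 25.284 ms 25.351 ms",
    "2242 UTC": "10 140.208.63.30 (140.208.63.30) 85.763 ms 85.768 ms 83.623 ms",
}

if __name__ == "__main__":
    for label, line in SAMPLE.items():
        hop, address, rtts = parse_hop(line)
        print(f"{label}: hop {hop} ({address}) mean RTT {mean(rtts):.1f} ms")
```

Hop 10 is where these runs differ most: roughly 25 ms in some runs and over 80 ms in others.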

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: address@hidden <address@hidden> on behalf of Pete Pokrandt <address@hidden>

Sent: Tuesday, April 16, 2019 3:04 PM

To: Gilbert Sebenste; Tyle, Kevin R

Cc: Kevin Goebbert; address@hidden; _NCEP.List.pmb-dataflow; Derek VanPelt - NOAA Affiliate; Mike Zuranski; Dustin Sheffler - NOAA Federal; address@hidden

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

At UW-Madison, we had incomplete 12 UTC GFS data starting with the 177h forecast. Lags exceeded 3600s.

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: Gilbert Sebenste <address@hidden>

Sent: Tuesday, April 16, 2019 2:44 PM

To: Tyle, Kevin R

Cc: Pete Pokrandt; Dustin Sheffler - NOAA Federal; Mike Zuranski; Derek VanPelt - NOAA Affiliate; Kevin Goebbert; address@hidden; _NCEP.List.pmb-dataflow; address@hidden

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

Yes, here at AllisonHouse too...we can feed from a number of sites, and all of them were dropping GFS, and delayed by an hour.

Gilbert

For what it's worth, our 12Z GFS data ingest was quite bad today ... many lost products beyond F168 (we feed from UWisc-MSN primary and PSU secondary).

_____________________________________________

Kevin Tyle, M.S.; Manager of Departmental Computing

Dept. of Atmospheric & Environmental Sciences

University at Albany

Earth Science 235, 1400 Washington Avenue

Albany, NY 12222

Email: address@hidden

Phone: 518-442-4578

_____________________________________________

From: address@hidden <address@hidden> on behalf of Pete Pokrandt <address@hidden>

Sent: Tuesday, April 16, 2019 12:00 PM

To: Dustin Sheffler - NOAA Federal; Mike Zuranski

Cc: Kevin Goebbert; address@hidden; Derek VanPelt - NOAA Affiliate; _NCEP.List.pmb-dataflow; address@hidden

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

All,

Just keeping this in the foreground.

CONDUIT lags continue to be very large compared to what they were before whatever changed back in February. Prior to that, we rarely saw lags of more than ~300s. Now they are routinely 1500-2000s at UW-Madison and Penn State, and over 3000s at Unidata - they appear to be on the edge of losing data. This does not bode well with all of the IDP applications failing back over to CP today..

Can we send you some traceroutes, and you send some back to us, to try to isolate where in the network this is happening? It feels like congestion or a bad route somewhere - the lags seem to be worse on weekdays than weekends, if that helps at all.

Here are the current CONDUIT lags to UW-Madison, Penn State and Unidata.

<iddstats_CONDUIT_idd_meteo_psu_edu_ending_20190416_1600UTC.gif>

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

Hi Mike,

Thanks for the feedback on NOMADS. We recently found a slowness issue when NOMADS is running out of our Boulder data center that is being worked on by our teams now that NOMADS is live out of the College Park data center. It's hard sometimes to quantify whether slowness issues that are only being reported by a handful of users are a result of something wrong in our data center, a bad network path between a customer (possibly just from a particular region of the country) and our data center, a local issue on the customers' end, or any other reason that might cause slowness.

Conduit is only ever run from our College Park data center. Its slowness is not tied into the Boulder NOMADS issue, but it does seem to be at least a little bit tied to which of our data centers NOMADS is running out of. When NOMADS is in Boulder along with the majority of our other NCEP applications, the strain on the College Park data center is minimal and Conduit appears to be running better as a result. When NOMADS runs in College Park (as it has since late yesterday) there is more strain on the data center and Conduit appears (based on provided user graphs) to run a bit worse around peak model times as a result. These are just my observations and we are still investigating what may have changed that caused the Conduit latencies to appear in the first place so that we can resolve this potential constraint.

-Dustin

Hi everyone,

I've avoided jumping into this conversation since I don't deal much with Conduit these days, but Derek just mentioned something that I do have some applicable feedback on...

> Two items happened last night. 1. NOMADS was moved back to College Park...

We get nearly all of our model data via NOMADS. When it switched to Boulder last week we saw a significant drop in download speeds, down to a couple hundred KB/s or slower. Starting last night, we're back to speeds on the order of MB/s or tens of MB/s. Switching back to College Park seems to confirm for me something about routing from Boulder was responsible. But again this was all on NOMADS, not sure if it's related to happenings on Conduit.

When I noticed this last week I sent an email to address@hidden including a traceroute taken at the time, let me know if you'd like me to find that and pass it along here or someplace else.

-Mike

======================

Mike Zuranski

Meteorology Support Analyst

College of DuPage - Nexlab

======================

On Tue, Apr 9, 2019 at 10:51 AM Person, Arthur A. < address@hidden> wrote:

Derek,

Do we know what change might have been made around February 10th when the CONDUIT problems first started happening? Prior to that time, the CONDUIT feed had been very crisp for a long period of time.

Thanks... Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: address@hidden,

phone: 814-863-1563

From: Derek VanPelt - NOAA Affiliate <address@hidden>

Sent: Tuesday, April 9, 2019 11:34 AM

To: Holly Uhlenhake - NOAA Federal

Cc: Carissa Klemmer - NOAA Federal; Person, Arthur A.; Pete Pokrandt; _NCEP.List.pmb-dataflow; address@hidden; address@hidden

Subject: Re: [conduit] Large lags on CONDUIT feed - started a week or so ago

Hi all,

Two items happened last night.

1. NOMADS was moved back to College Park, which means there was a lot more traffic going out, which will have an effect on the Conduit latencies. We do not have a full load from the College Park servers, as many of the other applications are still running from Boulder, but NOMADS will certainly increase overall load.

2. As Holly said, there were further issues delaying and changing the timing of the model output yesterday afternoon/evening. I will be watching from our end, and monitoring the Unidata 48 hour graph (thank you for the link) throughout the day.

Please let us know if you have questions or more information to help us analyse what you are seeing.

Thank you,

Derek

On Tue, Apr 9, 2019 at 6:50 AM Holly Uhlenhake - NOAA Federal < address@hidden> wrote:

Hi Pete,

We also had an issue on the supercomputer yesterday where several models going to conduit would have been stacked on top of each other instead of coming out in a more spread out fashion. It's not inconceivable that conduit could have backed up working through the abnormally large glut of grib messages. Are things better this morning at all?

Thanks,

Holly

Something changed starting with today's 18 UTC model cycle, and our lags shot up to over 3600 seconds, where we started losing data. They are growing again now with the 00 UTC cycle as well. PSU and Unidata CONDUIT stats show similar abnormally large lags.

FYI.

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: Person, Arthur A. <address@hidden>

Sent: Friday, April 5, 2019 2:10 PM

To: Carissa Klemmer - NOAA Federal

Cc: Pete Pokrandt; Derek VanPelt - NOAA Affiliate; Gilbert Sebenste; address@hidden; _NCEP.List.pmb-dataflow; address@hidden

Subject: Re: Large lags on CONDUIT feed - started a week or so ago

Carissa,

The Boulder connection is definitely performing very well for CONDUIT. Although there have been a couple of little blips (~120 seconds) since yesterday, overall the performance is superb. I don't think it's quite as clean as prior to the ~February 10th date when the D.C. connection went bad, but it's still excellent performance. Here's our graph now with a single connection (no splits):

My next question is: Will CONDUIT stay pointing at Boulder until D.C. is fixed, or might you be required to switch back to D.C. at some point before that?

Thanks... Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: address@hidden,

phone: 814-863-1563

From: Carissa Klemmer - NOAA Federal <address@hidden>

Sent: Thursday, April 4, 2019 6:22 PM

To: Person, Arthur A.

Cc: Pete Pokrandt; Derek VanPelt - NOAA Affiliate; Gilbert Sebenste; address@hidden; _NCEP.List.pmb-dataflow; address@hidden

Subject: Re: Large lags on CONDUIT feed - started a week or so ago

Catching up here.

Derek,

Do we have traceroutes from all users? Does anything in VCenter show any system resource constraints?

On Thursday, April 4, 2019, Person, Arthur A. < address@hidden> wrote:

Yeh, definitely looks "blipier" starting around 7Z this morning, but nothing like it was before. And all last night was clean. Here's our graph with a 2-way split, a huge improvement over what it was before the switch to Boulder:

Agree with Pete that this morning's data probably isn't a good test since there were other factors. Since this seems so much better, I'm going to try switching to no split as an experiment and see how it holds up.

Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: address@hidden,

phone: 814-863-1563

From: Pete Pokrandt <address@hidden>

Sent: Thursday, April 4, 2019 1:51 PM

To: Derek VanPelt - NOAA Affiliate

Cc: Person, Arthur A.; Gilbert Sebenste; Anne Myckow - NOAA Affiliate; address@hidden; _NCEP.List.pmb-dataflow; address@hidden

Subject: Re: [Ncep.list.pmb-dataflow] [conduit] Large lags on CONDUIT feed - started a week or so ago

Ah, so perhaps not a good test.. I'll set it back to a 5-way split and see how it looks tomorrow.

Thanks for the info,

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: Derek VanPelt - NOAA Affiliate <address@hidden>

Sent: Thursday, April 4, 2019 12:38 PM

To: Pete Pokrandt

Cc: Person, Arthur A.; Gilbert Sebenste; Anne Myckow - NOAA Affiliate; address@hidden; _NCEP.List.pmb-dataflow; address@hidden

Subject: Re: [Ncep.list.pmb-dataflow] [conduit] Large lags on CONDUIT feed - started a week or so ago

Hi Pete -- we did have a separate issue hit the CONDUIT feed today. We should be recovering now, but the backlog was sizeable. If these numbers are not back to the baseline in the next hour or so please let us know. We are also watching our queues and they are decreasing, but not as quickly as we had hoped.

Thank you,

Derek

On Thu, Apr 4, 2019 at 1:26 PM 'Pete Pokrandt' via _NCEP list.pmb-dataflow < address@hidden> wrote:

FYI - there is still a much larger lag for the 12 UTC run with a 5-way split compared to a 10-way split. It's better since everything else failed over to Boulder, but I'd venture to guess that's not the root of the problem.

Prior to whatever is going on to cause this, I don't recall ever seeing lags this large with a 5-way split. It looked much more like the left hand side of this graph, with small increases in lag with each 6 hourly model run cycle, but more like 100 seconds vs the ~900 that I got this morning.

FYI I am going to change back to a 10 way split for now.

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: address@hidden <address@hidden> on behalf of Pete Pokrandt <address@hidden>

Sent: Wednesday, April 3, 2019 4:57 PM

To: Person, Arthur A.; Gilbert Sebenste; Anne Myckow - NOAA Affiliate

Cc: address@hidden; _NCEP.List.pmb-dataflow; address@hidden

Subject: Re: [conduit] [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

Sorry, was out this morning and just had a chance to look into this. I concur with Art and Gilbert that things appear to have gotten better starting with the failover of everything else to Boulder yesterday. I will also reconfigure to go back to a 5-way split (as opposed to the 10-way split that I've been using since this issue began) and keep an eye on tomorrow's 12 UTC model run cycle - if the lags go up, it usually happens worst during that cycle, shortly before 18 UTC each day.

I'll report back tomorrow how it looks, or you can see at

Thanks,

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

Anne,

I'll hop back in the loop here... for some reason these replies started going into my junk file (bleh). Anyway, I agree with Gilbert's assessment. Things turned real clean around 12Z yesterday, looking at the graphs. I usually look at flood.atmos.uiuc.edu when there are problems, as their connection always seems to be the cleanest. If there are even small blips or ups and downs in their latencies, that usually means there's a network aberration somewhere that usually amplifies into hundreds or thousands of seconds at our site and elsewhere. Looking at their graph now, you can see the blipiness up until 12Z yesterday, and then it's flat (except for the one spike around 16Z today which I would ignore):

<pastedImage.png>
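The "watch the cleanest site for blips" heuristic can be approximated in a few lines. This is only a sketch of one way to flag blips in a latency series from a reference site; the function name `find_blips`, the factor of 3, and the 5 second floor are assumptions for illustration, not values from the thread.

```python
from statistics import median


def find_blips(latencies_s, factor=3.0, floor_s=5.0):
    """Return the indices of samples that stand well above the series median.

    A sample counts as a blip when it exceeds both factor x median and
    floor_s seconds. Both thresholds are arbitrary, illustrative choices.
    """
    baseline = median(latencies_s)
    limit = max(baseline * factor, floor_s)
    return [i for i, value in enumerate(latencies_s) if value > limit]


if __name__ == "__main__":
    # Hypothetical latency samples in seconds, not data from the thread.
    series = [1.0, 1.2, 0.9, 20.0, 1.1, 1.0]
    print(find_blips(series))
```

Using the median as the baseline keeps a single large spike from raising the threshold, so an isolated spike like the one Art says he would ignore is still reported, and the reader can decide whether it matters.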

Our direct-connected site, which is using a 10-way split right now, also shows a return to calmness in the latencies:

Prior to the recent latency jump, I did not use split requests and the reception had been stellar for quite some time. It's my suspicion that this is a networking congestion issue somewhere close to the source since it seems to affect all downstream sites. For that reason, I don't think solving this problem should necessarily involve upgrading your server software, but rather identifying what's jamming up the network near D.C., and testing this by switching to Boulder was an excellent idea. I will now try switching our system to a two-way split to see if this performance holds up with fewer pipes. Thanks for your help and I'll let you know what I find out.

Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: address@hidden,

phone: 814-863-1563

On Wed, Apr 3, 2019 at 10:03 AM Anne Myckow - NOAA Affiliate < address@hidden> wrote:

Hi Pete,

As of yesterday we failed almost all of our applications to our site in Boulder (meaning away from CONDUIT). Have you noticed an improvement in your speeds since yesterday afternoon? If so this will give us a clue that maybe there's something interfering on our side that isn't specifically CONDUIT, but another app that might be causing congestion. (And if it's the same then that's a clue in the other direction.)

Thanks,

Anne

The lag here at UW-Madison was up to 1200 seconds today, and that's with a 10-way split feed. Whatever is causing the issue has definitely not been resolved, and historically is worse during the work week than on the weekends. If that helps at all.

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: Anne Myckow - NOAA Affiliate <address@hidden>

Sent: Thursday, March 28, 2019 4:28 PM

To: Person, Arthur A.

Cc: Carissa Klemmer - NOAA Federal; Pete Pokrandt; _NCEP.List.pmb-dataflow; address@hidden; address@hidden

Subject: Re: [Ncep.list.pmb-dataflow] Large lags on CONDUIT feed - started a week or so ago

We will not be upgrading to version 6.13 on these systems as they are not robust enough to support the local logging inherent in the new version.

I will check in with my team on if there are any further actions we can take to try and troubleshoot this issue, but I fear we may be at the limit of our ability to make this better.

I’ll let you know tomorrow where we stand. Thanks.

Anne

On Mon, Mar 25, 2019 at 3:00 PM Person, Arthur A. < address@hidden> wrote:

Carissa,

Can you report any status on this inquiry?

Thanks... Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: address@hidden,

phone: 814-863-1563

From: Carissa Klemmer - NOAA Federal <address@hidden>

Sent: Tuesday, March 12, 2019 8:30 AM

To: Pete Pokrandt

Cc: Person, Arthur A.; address@hidden; address@hidden; _NCEP.List.pmb-dataflow

Subject: Re: Large lags on CONDUIT feed - started a week or so ago

Hi Everyone

I’ve added the Dataflow team email to the thread. I haven’t heard that any changes were made or that any issues were found. But the team can look today and see if we have any signifiers of overall slowness with anything.

Dataflow, try taking a look at the new Citrix or VM troubleshooting tools to see if there are any abnormal signatures that may explain this.

On Monday, March 11, 2019, Pete Pokrandt < address@hidden> wrote:

Art,

I don't know if NCEP ever figured anything out, but I've been able to keep my latencies reasonable (300-600s max, mostly during the 12 UTC model suite) by splitting my CONDUIT request 10 ways, instead of the 5 that I had been doing, or in a single request.

Maybe give that a try and see if it helps at all.
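For readers who have not set up a split feed: it is usually written as several `REQUEST` lines in LDM's `ldmd.conf`, each with a regular expression that selects a share of the products. The sketch below generates such lines under the assumption that CONDUIT product IDs end in a numeric sequence number of at least two digits, so matching the trailing digit(s) divides the products evenly. The helper names and the grouping of digits are illustrative, not taken from anyone's configuration in this thread.

```python
import re

DIGITS = "0123456789"


def split_patterns(n_ways):
    """Return n_ways regexes that partition IDs by their trailing digits.

    Supports 1, 2, 5 and 10 (last digit only) and 20 (last digit plus the
    parity of the digit before it). Other values raise ValueError.
    """
    if n_ways in (1, 2, 5, 10):
        size = 10 // n_ways
        groups = [DIGITS[i:i + size] for i in range(0, 10, size)]
        return [f"[{group}]$" for group in groups]
    if n_ways == 20:
        return [f"[{tens}]{d}$" for d in DIGITS for tens in ("02468", "13579")]
    raise ValueError(f"unsupported split: {n_ways}")


def request_lines(n_ways, host="conduit.ncep.noaa.gov", feed="CONDUIT"):
    """Build ldmd.conf-style REQUEST lines, one per pattern."""
    return [f'REQUEST {feed} "{p}" {host}' for p in split_patterns(n_ways)]


def matches_exactly_one(product_id, patterns):
    """True if exactly one pattern selects this product ID."""
    return sum(bool(re.search(p, product_id)) for p in patterns) == 1


if __name__ == "__main__":
    for line in request_lines(10):
        print(line)
```

Each pattern results in its own connection to the upstream host, which is why a 10-way or 20-way split opens that many pipes. `matches_exactly_one` is a quick check that no product is requested twice or missed.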

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

From: Person, Arthur A. <address@hidden>

Sent: Monday, March 11, 2019 3:45 PM

To: Holly Uhlenhake - NOAA Federal; Pete Pokrandt

Cc: address@hidden; address@hidden

Subject: Re: [conduit] Large lags on CONDUIT feed - started a week or so ago

Holly,

Was there any resolution to this on the NCEP end? I'm still seeing terrible delays (1000-4000 seconds) receiving data from conduit.ncep.noaa.gov. It would be helpful to know if things are resolved at NCEP's end so I know whether to look further down the line.

Thanks... Art

Arthur A. Person

Assistant Research Professor, System Administrator

Penn State Department of Meteorology and Atmospheric Science

email: address@hidden,

phone: 814-863-1563

Hi Pete,

We'll take a look and see if we can figure out what might be going on. We haven't done anything to try and address this yet, but based on your analysis I'm suspicious that it might be tied to a resource constraint on the VM or the blade it resides on.

Thanks,

Holly Uhlenhake

Acting Dataflow Team Lead

On Thu, Feb 21, 2019 at 11:32 AM Pete Pokrandt < address@hidden> wrote:

Just FYI, data is flowing, but the large lags continue.

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

Data is flowing again - picked up somewhere in the GEFS. Maybe CONDUIT server was restarted, or ldm on it? Lags are large (3000s+) but dropping slowly

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

Just a quick follow-up - we started falling far enough behind (3600+ sec) that we are losing data. We got short files starting at 174h into the GFS run, and only got (incomplete) data through 207h.

We have now not received any data on CONDUIT since 11:27 AM CST (1727 UTC) today (Wed Feb 20)
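The numbers quoted throughout this thread suggest two rough thresholds: lags under about 300 seconds were normal before February, and lags past about 3600 seconds coincide with missing data. A minimal sketch of that bookkeeping follows, assuming lag is simply arrival time minus product creation time; the constant and function names are hypothetical, and the cutoffs are read off the thread rather than from any LDM setting.

```python
from datetime import datetime, timezone

NORMAL_MAX_S = 300       # assumption: "rarely saw lags more than ~300s"
LOSS_THRESHOLD_S = 3600  # assumption: lag at which the thread reports lost data


def lag_seconds(created, received):
    """Lag of one product: time received minus time created, in seconds."""
    return (received - created).total_seconds()


def classify_lag(lag_s):
    """Bucket a lag value using the rough thresholds above."""
    if lag_s >= LOSS_THRESHOLD_S:
        return "data loss likely"
    if lag_s > NORMAL_MAX_S:
        return "elevated"
    return "normal"


if __name__ == "__main__":
    # Hypothetical timestamps for illustration only.
    created = datetime(2019, 2, 20, 16, 27, tzinfo=timezone.utc)
    received = datetime(2019, 2, 20, 17, 27, tzinfo=timezone.utc)
    lag = lag_seconds(created, received)
    print(lag, classify_lag(lag))
```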

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

Carissa,

We have been feeding CONDUIT using a 5-way split feed direct from conduit.ncep.noaa.gov, and it had been really good for some time, lags 30-60 seconds or less.

However, the past week or so, we've been seeing some very large lags during each 6 hour model suite - Unidata is also seeing these - they are also feeding direct from conduit.ncep.noaa.gov.

Any idea what's going on, or how we can find out?

Thanks!

Pete

--

Pete Pokrandt - Systems Programmer

UW-Madison Dept of Atmospheric and Oceanic Sciences

608-262-3086 - address@hidden

_______________________________________________

NOTE: All exchanges posted to Unidata maintained email lists are

recorded in the Unidata inquiry tracking system and made publicly

available through the web. Users who post to any of the lists we

maintain are reminded to remove any personal information that they

do not want to be made public.

conduit mailing list

address@hidden

For list information or to unsubscribe, visit:

http://www.unidata.ucar.edu/mailing_lists/

--

Carissa Klemmer

NCEP Central Operations

IDSB Branch Chief

301-683-3835

_______________________________________________

Ncep.list.pmb-dataflow mailing list

address@hidden

https://www.lstsrv.ncep.noaa.gov/mailman/listinfo/ncep.list.pmb-dataflow

--

Anne Myckow

Lead Dataflow Analyst

NOAA/NCEP/NCO

301-683-3825


--

----

Gilbert Sebenste

Consulting Meteorologist

AllisonHouse, LLC


--

Derek Van Pelt

DataFlow Analyst

NOAA/NCEP/NCO


--

Misspelled straight from Derek's phone.


--

Dustin Sheffler

NCEP Central Operations - Dataflow

5830 University Research Court, Rm 1030

College Park, Maryland 20740


|